Time and Space Complexities¶

So, when someone refers to either “time” or “space” complexities, they are talking about “Big-O notation”. It’s a way of writing down how much an algorithm scales in either the time it takes to process, or the amount of memory it takes up, with respect to the number of elements given to the algorithm.

For example, to count the number of apples in an orchard, it takes O(n) time, where “n” is the number of apples. It also takes O(1) space, because we aren’t storing any apples to count them, so no space is taken up (1 is just a default number). There is an “O()” around these to indicate this is how something scales, hence the name “Big-O notation”.

Here’s another example, in code this time: there is a for loop inside of a for loop that does something for

each item in an array (both loops). The first loop iterates “n” times, where “n” is the number of items in the array.

However, the inner for loop also iterates “n” times. So, the total number of times the inner loop iterates is “n * n”, or “n^2”

(“n” squared). The time complexity for this operation would then be written as O(n^2) time. The same reasoning applies

for space complexity - if this operation stored all items in the array into “n” arrays, the space complexity would be

O(n^2) as well.

A few other things to note with Big-O notation:

Constants are dropped (there is no O(5 * n), only O(n)).

O(log(n)) refers to operations that are not constant (not O(1)) but still scale substantially less than O(n).

Formally, O(log(n)) refers to operations that halve the time to completion each time “n” increases.

O(n * log(n)) refers to the above, but multiplied by “n”.

O(1) refers to “constant” operations that do not scale with “n” but are always the same.

All algorithms and structures in the Sorting and Structures sections are marked with these.

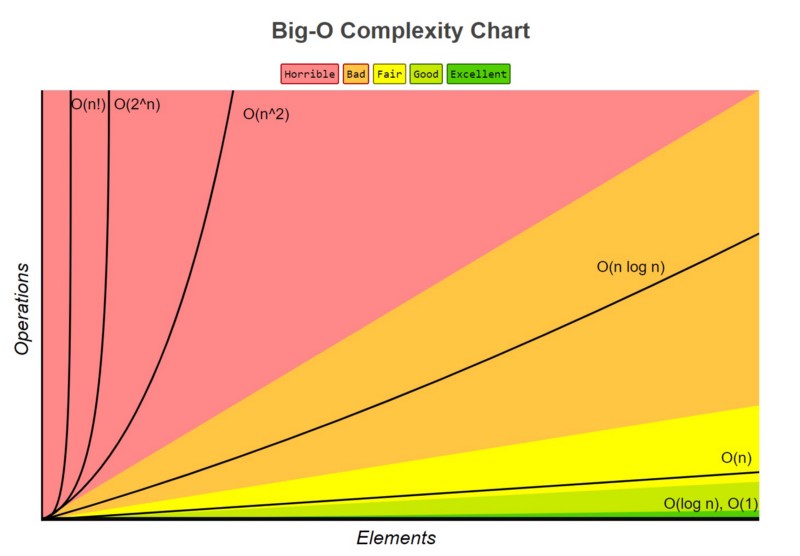

Here is a diagram of how these things scale:

Here is a website summarizing these complexities in common algorithms.